
Artificial intelligence is transforming how we work, communicate, learn, and create. It operates with increasing autonomy across both the physical and digital realms, promising to augment our personal and professional lives. But as AI systems become increasingly complex and widespread, something critical is breaking down: trust.

For decades, trust has been a foundational assumption of the digital world. We trusted the evidence of our own eyes and ears. We trusted the security of the systems we used. We trusted that organizations had control over their digital environments.

AI is challenging each of these assumptions simultaneously. And while digital interactions have always involved some level of risk, AI is amplifying uncertainty at scale. The result is a growing sense that what we see, hear, and rely on may no longer be trustworthy.

In expanding what’s possible, AI is also redefining what’s believable.

Shadow AI and trust at the organizational level

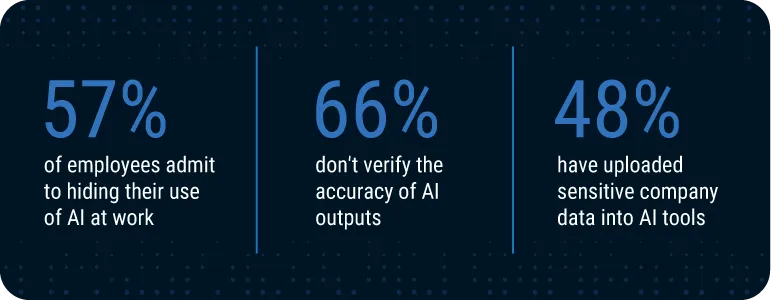

Enterprises are adopting AI at speed, often driven by competitive pressure, productivity gains, and executive mandates. But AI governance, visibility, and security are struggling to keep up. The result is an internal trust gap.

One of the clearest indicators is the rise of shadow AI. It's now present in more than 90% of organizations as employees increasingly rely on unapproved AI tools.

Source: Business Insider

The use of shadow AI is rarely malicious. Most employees are just trying to work faster and automate repetitive tasks. But unsanctioned AI use reduces organizational control over how data is shared, processed, and reused. Organizations are losing visibility into where data goes and how it gets used.

AI also complicates accountability. It’s often unclear which system made a decision or who’s responsible for it. This lack of transparency introduces AI security and compliance risk. But restrictive governance frameworks aren’t always effective; employees find clever ways to work around them.

This dynamic undermines internal trust: the confidence employees, leaders, and partners have in the systems that power the business. It also compounds the mistrust consumers already feel when they do business with these organizations.

AI is causing consumer trust to decline

AI-driven threats are scaling faster than the human ability to detect them.

Deepfake technology is one clear example: The number of deepfake files has exploded from roughly 500,000 in 2023 to more than 8 million in 2025. And fraud attempts tied to AI have surged by as much as 3,000% in a single year.

Source: Business Insider

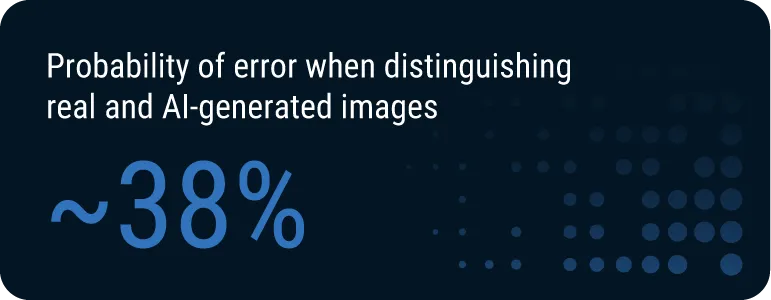

At the same time, deepfake quality has improved to a point where humans can no longer reliably identify what’s real and what’s not. In controlled studies, people correctly identified AI-generated images around 62% of the time, only slightly better than leaving it up to pure chance.

This uncertainty also extends beyond visual media. Just 40% of individual consumers consider AI a trustworthy source of information. A staggering 70% don't trust companies to use AI responsibly with their data.

This is the defining tension in the age of artificial intelligence: As generative AI adoption explodes, trust breaks down.

3 major barriers to achieving AI trust

If organizations want to restore internal and external trust, they must find a way to meet today’s new AI trust challenges head-on. That requires addressing three key issues:

AI agents are operating without the appropriate guardrails: Agents can execute tasks, access systems, and make decisions, often without clear identity, accountability, or governance. Even organizations that know agents are operating in their environments may lack full visibility into their actions or clear accountability when something goes wrong.

Models are exposing new security gaps: AI models are revolutionizing fields such as medical diagnostics and complex predictive analytics. But models are at real risk of tampering, misuse, or data leakage. Model makers worry about their proprietary IP, while model users are rightly concerned with data security. And on both sides, there are real risks if the model loses accuracy.

Content can no longer be assumed authentic: Synthetic media is now easy to create, cheap to produce, and difficult to detect, making content authenticity harder to establish in everything from news to social media to corporate communications.

Each of these issues expands what we call the “trust surface,” the total area where trust must be established or maintained. And this expanding surface requires a new approach.

Intelligent trust in the age of AI

Traditional approaches to trust were designed for a simpler digital world. In that world, software behaved deterministically, systems operated within clearly defined perimeters, and content was generated by humans.

In a world where AI breaks these assumptions, trust can no longer be assumed; it must be verifiable and provable.

That’s why DigiCert launched the AI Trust initiative. Our aim is to build on our long-standing foundation in digital trust, extending proven cryptographic principles into the AI ecosystem to verifiably secure AI systems and the content they produce.

To that end, we're focusing on three key areas.

1. Trust in AI agents: Governing the autonomous workforce

AI agents represent a new class of digital actor. They operate with limited supervision and at massive scale, but most organizations lack:

- Clear visibility into agent activity

- Strong identity for agents

- Fine-grained control over agent behavior

- Auditability and unambiguous human responsibility

DigiCert aims to address these shortcomings by providing:

- Cryptographic identity for agents

- Policy-bound authorization

- Continuous validation and auditability

- Agent governance and lifecycle management

This transforms agents from opaque processes into accountable, governed entities.
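
To make the ideas of agent identity, policy-bound authorization, and auditability more concrete, here is a minimal Python sketch. It is an illustration only and not DigiCert's implementation: the names `ISSUER_KEY`, `issue_credential`, `authorize`, and `audit_log` are hypothetical, and an HMAC from the standard library stands in for the certificate-based signatures a production system would likely use.

```python
import hashlib
import hmac
import json

# Hypothetical issuer secret; a stand-in for a real signing key.
ISSUER_KEY = b"demo-issuer-key"

# Every authorization decision is recorded here for later review.
audit_log = []


def _sign(claim: dict) -> str:
    """Sign a claim with a deterministic JSON encoding."""
    payload = json.dumps(claim, sort_keys=True).encode()
    return hmac.new(ISSUER_KEY, payload, hashlib.sha256).hexdigest()


def issue_credential(agent_id: str, allowed_actions: list) -> dict:
    """Bind an agent identity to the actions its policy permits."""
    claim = {"agent_id": agent_id, "allowed_actions": sorted(allowed_actions)}
    return {"claim": claim, "signature": _sign(claim)}


def authorize(credential: dict, action: str) -> bool:
    """Allow an action only if the credential is intact and the policy permits it."""
    claim = credential["claim"]
    valid = hmac.compare_digest(_sign(claim), credential["signature"])
    allowed = valid and action in claim["allowed_actions"]
    audit_log.append(
        {"agent_id": claim["agent_id"], "action": action, "allowed": allowed}
    )
    return allowed


credential = issue_credential("agent-7", ["read_report"])
print(authorize(credential, "read_report"))   # True: permitted by policy
print(authorize(credential, "delete_records"))  # False: outside the policy
```

Because the permitted actions are part of the signed claim, editing the policy after issuance invalidates the signature, and each decision leaves an audit entry tied to a specific agent.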

2. Trust in AI models: Securing the decision layer

AI models are now critical infrastructure. They power decisions that impact finance, healthcare, compliance, and more. But without integrity controls, they introduce new risks:

- Stolen IP and eroded competitive advantage for model makers

- Data exposure and regulatory non-compliance

- Tampering and model drift resulting in inaccurate outcomes

- Poorly secured execution environments

DigiCert brings cryptographic assurance to the model lifecycle, offering:

- Verifiable model provenance

- Ongoing integrity validation

- Secure distribution and execution in trusted environments

- Model lifecycle controls

With DigiCert’s intelligent trust framework, models become as trustworthy as they are powerful.
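
As a rough illustration of what ongoing integrity validation can look like, the Python sketch below records a SHA-256 digest when a model artifact is released and checks the artifact against that digest later. The function names and the sample bytes are hypothetical, and a real deployment would likely also sign the recorded digest so that it cannot be swapped along with the model.

```python
import hashlib
import hmac


def digest(model_bytes: bytes) -> str:
    """Return the SHA-256 hex digest of a serialized model artifact."""
    return hashlib.sha256(model_bytes).hexdigest()


def verify_model(model_bytes: bytes, expected_digest: str) -> bool:
    """Check that the artifact still matches the digest recorded at release."""
    return hmac.compare_digest(digest(model_bytes), expected_digest)


# Hypothetical release step: the publisher records the digest once.
released = b"weights-v1"
recorded = digest(released)

print(verify_model(released, recorded))                # True: unchanged artifact
print(verify_model(b"weights-v1-tampered", recorded))  # False: modified artifact
```

Any change to the artifact, even a single byte, produces a different digest, so the check fails before a tampered model is loaded.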

3. Trust in content: Dispelling doubt with verified authenticity

In a world of synthetic media, people can no longer rely on what they see. Images and video may originate from a camera or be generated entirely by AI. As a result, audiences can’t easily answer basic questions:

- Is the original content real or synthetically generated?

- Has this content been manipulated after its initial creation?

- Can the source showing me the content be trusted?

Standards like C2PA seek to clear up this uncertainty. Content signed under this standard carries cryptographically signed metadata that travels with it, allowing anyone to independently verify its authenticity. With its C2PA-compliant Content Trust solution, DigiCert can provide a trusted source of truth that shows:

- How content was generated

- The time and date of content creation

- Whether and how content has been modified

By adhering to the rigorous C2PA standard, DigiCert aims to restore trust in digital content, offering the transparency audiences require, confirmed and verified by a trusted, neutral third party.
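
The general idea of signed metadata that travels with content can be sketched in a few lines of Python. This is a simplified illustration, not the C2PA format or DigiCert's Content Trust solution: the manifest fields and the names `SIGNING_KEY`, `make_manifest`, and `verify` are hypothetical, and an HMAC stands in for the certificate-based public-key signatures that allow anyone to verify content independently.

```python
import hashlib
import hmac
import json

# Hypothetical key; a stand-in for a signer's private key.
SIGNING_KEY = b"demo-key"


def _sign(claim: dict) -> str:
    """Sign a claim with a deterministic JSON encoding."""
    payload = json.dumps(claim, sort_keys=True).encode()
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def make_manifest(content: bytes, generator: str, created: str) -> dict:
    """Bind provenance details to the exact bytes of the content."""
    claim = {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "generator": generator,
        "created": created,
    }
    return {"claim": claim, "signature": _sign(claim)}


def verify(content: bytes, manifest: dict) -> bool:
    """Confirm the manifest is intact and still describes this content."""
    claim = manifest["claim"]
    signature_ok = hmac.compare_digest(_sign(claim), manifest["signature"])
    content_ok = hmac.compare_digest(
        hashlib.sha256(content).hexdigest(), claim["content_sha256"]
    )
    return signature_ok and content_ok


photo = b"raw image bytes"
manifest = make_manifest(photo, "camera", "2025-01-01T12:00:00Z")

print(verify(photo, manifest))              # True: content matches its manifest
print(verify(photo + b"edited", manifest))  # False: content changed after signing
```

The manifest answers the three questions above in miniature: how the content was generated, when it was created, and whether the bytes have changed since signing.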

Trust as a competitive advantage

As shadow AI snowballs and consumer trust continues to break down, organizations that fail to address AI trust will face operational, financial, regulatory, and reputational risks.

But organizations that solve for trust will gain a competitive edge.

They’ll be able to scale AI faster and reap its operational benefits without falling victim to the new security risks it brings. They’ll nurture stronger consumer and employee confidence. And they’ll be well prepared to conquer compliance challenges.

In short, trust-forward organizations will operate with the confidence to scale AI without introducing new risk.

How DigiCert is helping restore trust

AI will continue to evolve. And trust will define its trajectory.

DigiCert has spent over two decades building the foundation of digital trust. That foundation now extends into the AI ecosystem through an intelligent trust approach, applying identity, integrity, accountability, and cryptographic verification across agents, models, and content.

Explore our AI Trust solution to see how DigiCert can help you move from uncertainty to reassurance by building a foundation of trust strong enough for the AI era.